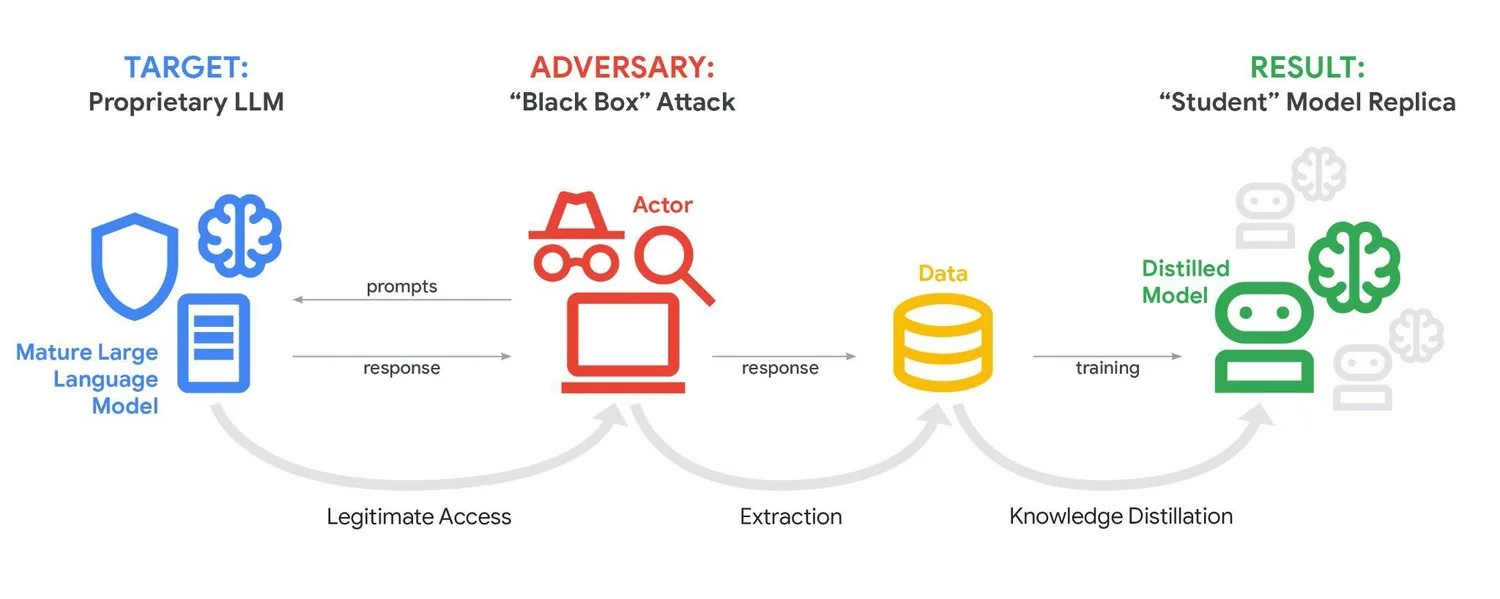

IT House reported on February 15 that Google said on February 12, local time, that its AI chatbot Gemini has been hit by a large number of "distillation attacks," in which attackers question the chatbot repeatedly to induce it to reveal its internal mechanisms.

According to Google, the attackers query the model over and over to map its output patterns and logic and to probe its internal mechanisms, with the aim of "cloning" the model or strengthening their own AI systems. One such campaign prompted Gemini more than 100,000 times.

In a report released on Thursday, Google said the attacks were carried out primarily by "commercially motivated actors." The company believes most of those behind them are private AI companies or research institutions seeking a competitive advantage. A Google spokesperson told NBC News that the attacks originated from multiple regions around the world, but declined to disclose more about the suspected parties.

John Hultquist, chief analyst of Google's Threat Intelligence Group, said the scale of the attacks on Gemini suggests that such attacks have likely already begun, or soon will, against the customized AI tools of smaller companies. He described Google's situation as a "canary in the coal mine," meaning that what large platforms are experiencing may signal broader risks for the industry.

Google stressed that such distillation attacks amount to intellectual property theft. Technology companies have invested billions of dollars in developing AI chatbots (IT House note: this refers to large language models), and the internal mechanisms of their core models are regarded as highly valuable proprietary assets. Although major vendors have deployed mechanisms to identify and block distillation attacks, mainstream large model services remain inherently vulnerable because they are open to everyone.

Google also said that most of the attacks were designed to draw out Gemini's "reasoning" algorithms, that is, the mechanisms by which it decides how to process information. Hultquist warned that the potential harm of distillation attacks will grow as more companies train customized LLMs for internal use, since those models may contain sensitive data. As an example, he said that if a company's LLM has learned 100 years of the firm's thinking about how it trades, key proprietary knowledge could in theory be extracted piece by piece through distillation.