"The Ray framework aims to solve many challenges in distributed computing and provide developers with an efficient and flexible tool to build and run distributed applications in Python."

What is Ray?

As data volumes surge and computing tasks grow more complex, traditional single-machine computing models can no longer meet the demand for large-scale, efficient computation. Distributed computing has risen to fill that gap, and Ray stands out among the available frameworks. It is designed specifically for distributed computing and gives Python developers an efficient, flexible platform for building distributed applications. Thanks to its performance, flexibility, and ease of use, Ray has been widely adopted in recent years by developers, researchers, Internet companies, and AI companies.

Ray is an open source distributed computing framework that originated at the RISELab at the University of California, Berkeley. Its core mission is to simplify the development of distributed systems, especially for machine learning and data science applications. Ray's design philosophy is to keep the convenience of single-machine development while scaling seamlessly to large clusters.

Ray's main features

Distributed computing capabilities

Ray gives developers the ability to parallelize Python code and make full use of multi-core processors and distributed computing resources. Its core components include:

· Task: The basic unit of computation. Any Python function can be run as a task.

· Scheduler: Responsible for dynamically scheduling the execution of tasks to ensure efficient utilization of resources.

Dynamic task scheduling

Ray's scheduler manages task execution based on the real-time state of the system and the resources currently available. Developers do not need to specify the execution order of tasks: Ray tracks the dependencies between them and runs each task once its inputs are ready, which significantly improves development efficiency.

Actor model support

Ray's Actor model allows the creation of objects with persistent state – actors. Each actor maintains state during its life cycle and communicates with other actors through message passing. This design greatly simplifies the construction of complex applications, especially in concurrent processing and state sharing scenarios.

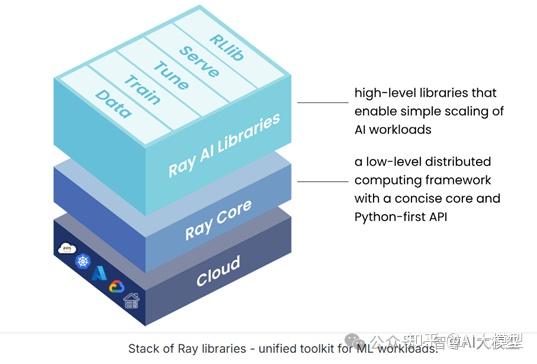

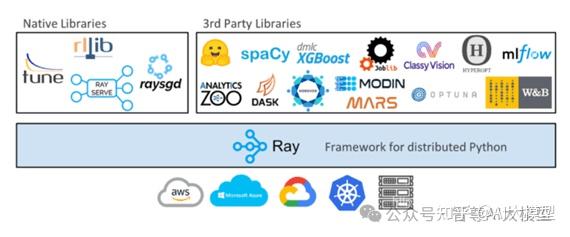

Strong ecosystem

Beyond the core framework, Ray ships with a set of libraries that cover a range of application scenarios:

· Ray Tune: A library focused on hyperparameter optimization that supports multiple optimization algorithms and can easily manage experiments.

· Ray Serve: A library for deploying machine learning models as scalable APIs, with support for model versioning and hot updates.

· RLlib: A library designed specifically for reinforcement learning, providing a set of out-of-the-box algorithms and training tools with support for distributed training.

User-friendly API and extensibility

Ray's API is intuitive and built around ordinary Python syntax, which keeps the learning curve low. Its core concepts, tasks and actors, are expressed as plain functions and classes, with scheduling handled behind the scenes, so developers can get started quickly. Ray's architecture scales seamlessly from a single machine to a large cluster and adapts to resource requirements of different sizes, which makes it well suited to production environments.

Ray application scenarios

Ray is used across many areas of AI and data processing. In recent years it has made significant progress in simplifying the development and deployment of AI models and in improving computing efficiency, and many AI companies have adopted it. Typical scenarios include:

· Reinforcement learning: RLlib provides multiple algorithm implementations and efficient distributed training.

· Large language models (LLMs): Ray provides a distributed computing framework for scaling LLMs, supporting efficient model training and deployment.

· Training many models: For applications that train multiple models at the same time (such as time series prediction), Ray's resource management and task scheduling let the training jobs run in parallel.

· Hyperparameter tuning: Ray Tune supports automated parameter search and experiment management, helping developers find good model configurations.

· Model serving: With Ray Serve, developers can deploy machine learning models as high-performance, scalable services for real-time prediction.

· Batch inference: Developers can scale batch inference from a single GPU to a large cluster and speed it up by processing input data in parallel, which is particularly important in scenarios such as image recognition and natural language processing.

Summary and outlook

Ray gives developers a powerful and flexible set of tools for tackling distributed computing challenges. By simplifying the development of complex distributed systems, it improves development efficiency and supports the deployment and management of large-scale applications. As data science and machine learning continue to advance, Ray is likely to keep playing a key role in efficient computing and new applications. For developers who want to build high-performance distributed applications in Python, whether in research, development, or production, Ray is well worth a look.
