Faced with the rapid rise of China's large models, Silicon Valley's response is becoming increasingly divided.

Just a few days ago, the CEO of Anthropic, who carries an anti-Huawei label, once again attacked Chinese models. He not only accused many Chinese open-source model companies of "distilling" Claude's data, which he suggested amounted to plagiarism, but also dismissed Chinese models' leaderboard scores as the result of "fixing questions in response to benchmark tests."

But on the evening of March 2, when Alibaba launched four small models in its Qianwen Kaixin 3.5 series, Musk promptly left a widely noticed comment on social media: "Impressive intelligence density."

On the surface, it looks like a casual thumbs-up from a technology geek. In the eyes of industry insiders, however, Musk's choice of the term "intelligence density" is like a sharp knife, cutting precisely into the "high performance, high premium" moat that American AI giants such as Anthropic have worked hard to maintain.

From stacking parameters to stacking density

"Intelligence density" is a core concept that Musk has repeatedly advocated over the past year. It is a key indicator of how efficient a large model is.

He has not only stated repeatedly that "the potential of AI intelligence density has been underestimated by two orders of magnitude," but has also publicly touted his own Grok 5 for its 6 trillion parameters and higher intelligence density per GB.

Now he has applied his most cherished concept to a competitor, which suggests that this is sincere approval of the Chinese model rather than routine business courtesy.

That same night, on the other side of the Pacific, the AI entrepreneur best known for "doing the math" gave exactly the same answer.

MiniMax founder Yan Junjie used the same keyword in his first earnings call after the company's listing. He summarized the company's core strategy as "continuous improvement of intelligence density, coupled with token throughput capacity." He said: "What ultimately determines victory or defeat is not simply burning money and resources, but the speed of advancement in intelligent capabilities."

Two events coincided on the same day and pointed to the same term. Extend the timeline further and it becomes clear that this is not a coincidence, but an industry consensus taking shape.

The main theme of the AI industry over the past three years has been an arms race: competing on parameters, competing on GPUs, and burning money. A trillion parameters was the entry threshold, a hundred thousand cards the standard, and whoever had the bigger number was king. But the returns on this path are diminishing: increase a model's parameters tenfold and performance may improve by only 20%, while cost rises more than tenfold.

Beneath the noise, a "hidden thread" has already surfaced.

In November 2025, research by the team of Professor Liu Zhiyuan of Tsinghua University appeared on the cover of "Nature Machine Intelligence" and formally proposed the "Densing Law" of large models. Based on rigorous backtesting of 51 mainstream large models, the paper reveals a surprising pattern: from 2023 to 2025, the intelligence density of large models doubled every 3.5 months.

This means that roughly every hundred days, the performance of the current best model can be matched with half as many parameters.
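The arithmetic behind that claim can be sketched in a few lines of Python. This is only an illustration, assuming the 3.5-month doubling holds as a constant exponential rate; the function names and the 100B example model are hypothetical and do not come from the paper.

```python
# Illustrative sketch: assumes intelligence density doubles at a constant
# exponential rate every 3.5 months (the figure quoted in the article).
DOUBLING_MONTHS = 3.5


def density_multiplier(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Factor by which intelligence density grows after `months`."""
    return 2.0 ** (months / doubling_months)


def params_needed(current_params_b: float, months: float,
                  doubling_months: float = DOUBLING_MONTHS) -> float:
    """Parameters (in billions) needed after `months` to match the
    performance of a model that needs `current_params_b` today."""
    return current_params_b / density_multiplier(months, doubling_months)


if __name__ == "__main__":
    # Hypothetical 100B-parameter model used purely as an example.
    for months in (3.5, 7.0, 12.0):
        print(f"after {months:>4} months: "
              f"density x{density_multiplier(months):.2f}, "
              f"equivalent size {params_needed(100.0, months):.1f}B")
```

Under this assumption, one doubling period halves the required parameters and two periods quarter them, which is the "half the parameters every hundred days" reading in the text.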

Professor Liu Zhiyuan explained it clearly: "The scaling law and the densing law are like the visible and hidden threads of large-model evolution. The same was true of the previous information revolution. The visible thread was devices getting smaller and smaller, mainframe → minicomputer → personal computer → mobile phone; the hidden thread was the efficiency gains of the chip industry, which is Moore's Law."

IBM chief research scientist Kaoutar El Maghraoui's judgment is more straightforward: "2026 will be the year of the battle between cutting-edge models and efficient models." The industry is tired of the diminishing returns of simply stacking scale and is looking for new solutions.

So what we are seeing is not just a few people happening to use the same word. From academia to industry to competitors, a new consensus is converging: the yardstick of AI competition has changed, from who is "bigger" to who is "denser".

Small models can also be practical

But not everyone buys it.

Just a few days ago, Anthropic CEO Amodei publicly took the opposite line, saying that Chinese models were optimized for benchmarks far more than for real-world use.

In other words: Chinese models earn their scores by drilling on test questions, so the scores do not count.

This statement has its own context, and it also exposes the deep anxiety of the leading American vendors. Every time a Chinese model proves that a small model is good enough for real use, the high-premium pricing model that Anthropic and OpenAI depend on comes under threat: if a 9B model can do the work of a model with ten times as many parameters, the API profit pool the giants have carefully built will drain away.

Then, Musk turned around and gave Qianwen a thumbs up.

There is a subtle game here: Musk is at loggerheads with OpenAI and Anthropic. By praising a Chinese model, he is to some extent also taking a swipe at his American rivals: the competitors you dismiss as not worth mentioning are challenging your pricing power in the way you fear most.

Of course, arguments are arguments, and the numbers behind the densing law are cold. According to the paper's statistics, the API call price of the GPT-3.5 model fell by a factor of 266.7 in just 20 months. This is not someone’s opinion; this is the law of technological deflation.
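To put the 266.7-fold figure in more familiar terms, it can be converted into a halving time. The sketch below assumes the decline was a smooth exponential over the 20 months, which is a simplification made here rather than a claim from the paper; the function names are hypothetical.

```python
import math


def halving_time_months(fold_drop: float, months: float) -> float:
    """Months it takes the price to halve, assuming a constant
    exponential decline that totals `fold_drop` over `months`."""
    return months / math.log2(fold_drop)


def monthly_price_ratio(fold_drop: float, months: float) -> float:
    """Price at the end of a month as a fraction of the price at its
    start, under the same constant-rate assumption."""
    return fold_drop ** (-1.0 / months)


if __name__ == "__main__":
    fold, span = 266.7, 20.0  # figures quoted in the article
    print(f"halving time: {halving_time_months(fold, span):.2f} months")
    print(f"month-over-month price ratio: {monthly_price_ratio(fold, span):.3f}")
```

On these assumptions the price halves about every two and a half months, or falls by roughly a quarter each month.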

The debate can go on. The curve waits for no one.

The small models of Qianwen 3.5 are the latest data point on this density curve, and the one that few dare to ignore.

Start with the most intuitive fact: a capability that required an entire server cluster a year ago now fits on a mobile phone.

With 9B parameters, Qianwen 3.5 matches or even beats models with ten times as many parameters on multiple benchmarks: it scores 81.7 on GPQA Diamond and 91.5 on instruction following, and in visual understanding it is well ahead of the comparable GPT-5-Nano on MMMU-Pro, 70.1 vs 57.2. Most striking of all, the 0.8B model, with fewer than 1 billion parameters, can process an ultra-long context of 260,000 tokens in one pass, equivalent to two or three novels, while running on an ordinary mobile phone instead of a hot server room.

The Qianwen team released 9 models in a row within 16 days, all fully open source under Apache 2.0, and each a leader in its own parameter class. This is not a victory for one product, but "living evidence" of an entire density curve.

Professor Liu Zhiyuan has long foreseen this trend. He once asserted: “As long as some kind of intelligence can be achieved, it will definitely be able to run on smaller terminals in the future.”

Qianwen 3.5 is bearing this out.

When a 9B model can match the performance of models with ten times as many parameters, the threshold for AI deployment drops from "a large company with a server cluster" to "an individual developer with a consumer-grade graphics card." When a company of a few hundred people can build a model that competes with giants employing thousands, the entry threshold for startups changes from "first raise a billion dollars" to "first find 300 smart people."

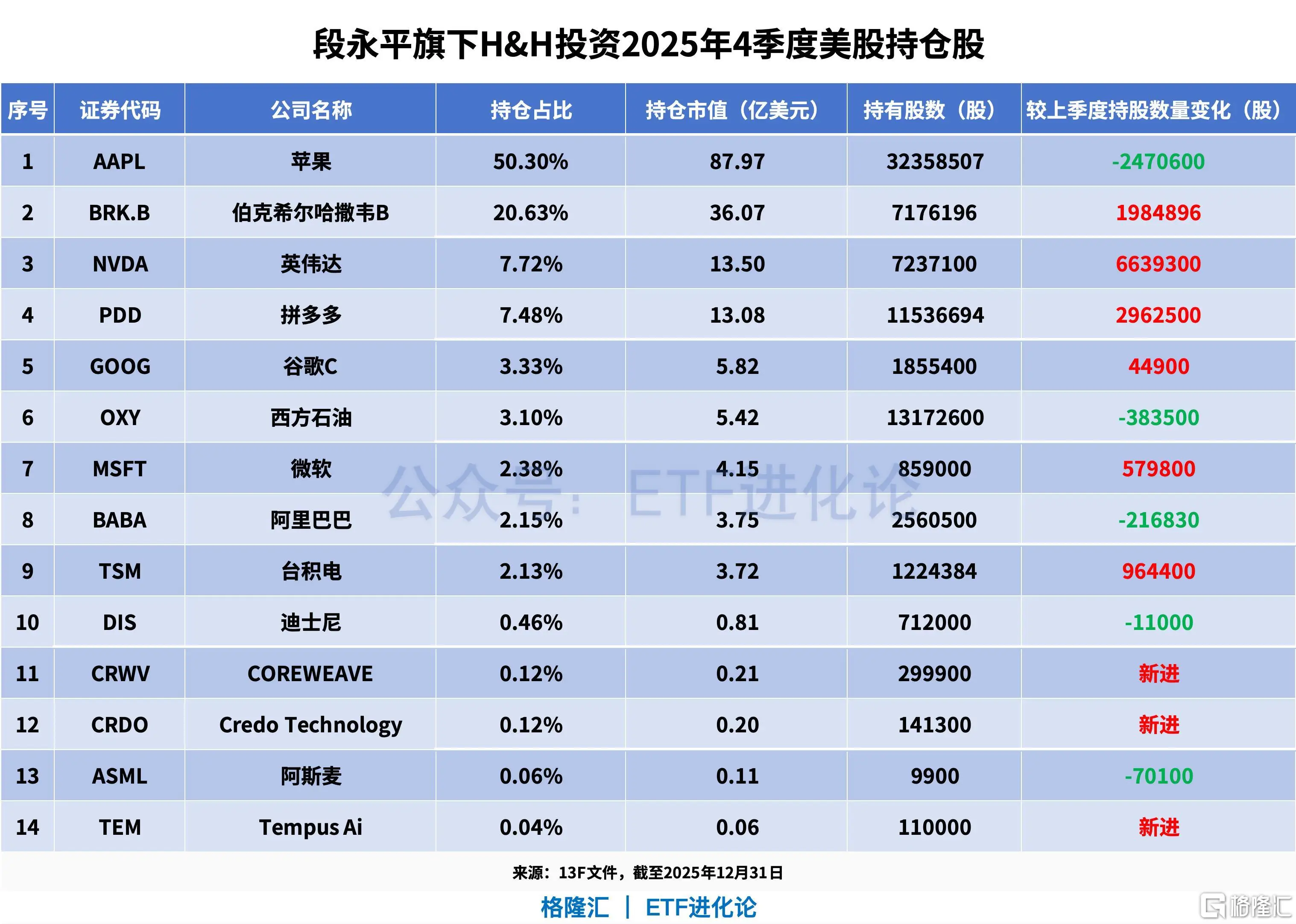

MiniMax is a footnote to the latter story. The company has 385 people, with an average age of 29. According to Yan Junjie's disclosure on the earnings call, it has spent only US$500 million since its founding. Set against OpenAI's thousands of employees and tens of billions in financing, it has delivered revenue growth of 159% and gross profit growth of 437%. Intelligence density is not only a technical property of model parameters, but also an extremely powerful organizational lever.

Don’t underestimate the ocean of personal devices. In the early days of the information revolution, someone predicted that "the world only needs a few large computers." Today there are more than 7 billion mobile phones in the world. Liu Zhiyuan once ran the numbers: as early as 2023, the total on-device computing power scattered across tens of millions of devices nationwide was already 12 times that of data centers.

The ultimate outcome of intelligence is destined to be distributed.

This is why "intelligence density" matters more than any single benchmark score. What it points to is not "whose model is stronger today", but "in what form and at how low a cost AI will eventually reach every ordinary person".

Back to Musk’s “Impressive intelligence density”. More than praising one launch by a Chinese company, he is confirming a turning point that is under way:

The second half of AI does not belong to the largest models, but to the densest intelligence.
