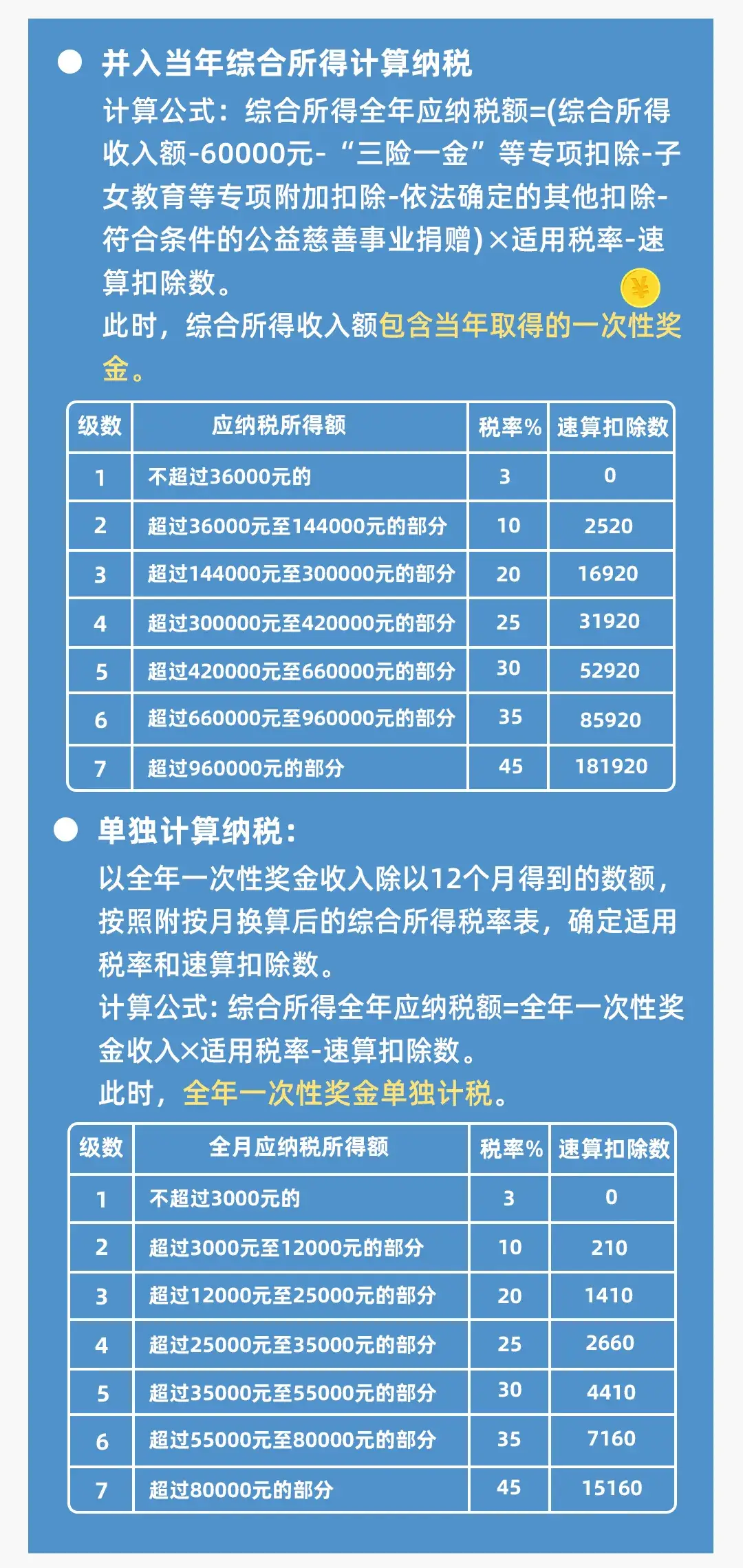

At the just-concluded Huawei China Partner Conference 2026, Ma Haixu, Huawei Vice President and President of the ICT Product Portfolio Management and Solutions Department, announced that the Atlas 350 accelerator card, which is built on the Ascend 950PR processor, is officially on sale. The launch means the chip has shipped on schedule after Huawei first unveiled the Ascend 950PR last year.

Zhang Dixuan, President of Huawei's Ascend Computing Business, said that the Atlas 350 delivers 2.87 times the single-card computing power of the NVIDIA H20 and is currently the only inference product in China that supports FP4 low precision. In terms of memory, its HBM capacity reaches 112GB, 1.16 times that of the H20, and multi-modal generation speed can increase by 60%. In addition, the memory access granularity has been reduced from 512 bytes to 128 bytes, improving memory access efficiency for small operators by 4 times.

What does FP4 support mean in practice? Observer.com found that the H200, which NVIDIA now wants to sell in China, does not support FP4 natively; only the more advanced Blackwell architecture introduced it. FP4 is essentially an aggressive inference approach that trades precision for efficiency. At 4 bits (half a byte) per parameter, the weights of a 70B-parameter model need only about 35GB of memory, so the model can be loaded on a single card with much lower inference latency, whereas FP16 needs 140GB.
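The 35GB and 140GB figures follow from simple arithmetic on bits per parameter. The sketch below reproduces them; the function name `weight_memory_gb` is made up for illustration, 1 GB is taken as 10^9 bytes, and only model weights are counted (KV cache, activations, and runtime overhead are ignored), so real deployments will need more headroom.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


HBM_CAPACITY_GB = 112  # Atlas 350 HBM capacity as quoted in the article

for name, bits in [("FP16", 16), ("FP8", 8), ("FP4", 4)]:
    needed = weight_memory_gb(70, bits)
    fits = needed <= HBM_CAPACITY_GB  # weights only; ignores KV cache etc.
    print(f"{name}: {needed:.0f} GB of weights, fits on one 112GB card: {fits}")
```

Under these assumptions, a 70B model's weights fit on a single 112GB card at FP8 or FP4 but not at FP16.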

At the event, seven core partners (Kunlun, Huakun Zhenyu, China Kuntai, Yangtze Computing, Boyd, iSoftStone Huafang, and Baixin) launched complete systems based on the Atlas 350, marking the official entry of the Ascend 950 generation of inference computing power into the commercial stage. iFlytek also stated that its new generation of Spark large models will be adapted to the Ascend 910/950 series computing platform.

Atlas 350 accelerator card. Source: Observer.com

At the booth, Observer.com saw that the Atlas 350 is rated at 1.56 PFLOPS of FP4 computing power, 1.4TB/s of bandwidth, and 600W of power consumption, the last being 1.5 times that of the H20.
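As a rough sanity check, the ratios quoted in this article can be inverted to see which H20 baseline they imply. This is only a back-calculation from the article's own numbers, not an official H20 specification, and the helper name `implied_baseline` is invented for illustration.

```python
def implied_baseline(value: float, ratio: float) -> float:
    """Return the baseline figure implied by 'value is ratio times the baseline'."""
    return value / ratio


# Figures quoted in the article for the Atlas 350 relative to the H20.
h20_hbm_gb = implied_baseline(112, 1.16)   # HBM capacity: 112GB is 1.16x the H20
h20_power_w = implied_baseline(600, 1.5)   # power: 600W is 1.5x the H20

print(f"Implied H20 HBM capacity: {h20_hbm_gb:.1f} GB")
print(f"Implied H20 power consumption: {h20_power_w:.0f} W")
```

Because the published ratios are rounded, the implied values are approximate.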

At last year's Huawei Connect conference, Huawei explained that the Ascend 950 series is divided into the Ascend 950PR and the Ascend 950DT. The former mainly targets prefill and recommendation scenarios. It uses HiBL 1.0, Huawei's self-developed low-cost HBM. Compared with high-performance, high-priced HBM3e/4e, it can greatly reduce the investment required for the prefill stage of inference and for recommendation services.

Judging by single-card specifications, the Ascend 950PR should have no trouble competing with the NVIDIA H20. However, it still trails the H200 by some margin in FP8/FP16 computing power and memory bandwidth, while its 600W power consumption is already close to the H200's 700W.

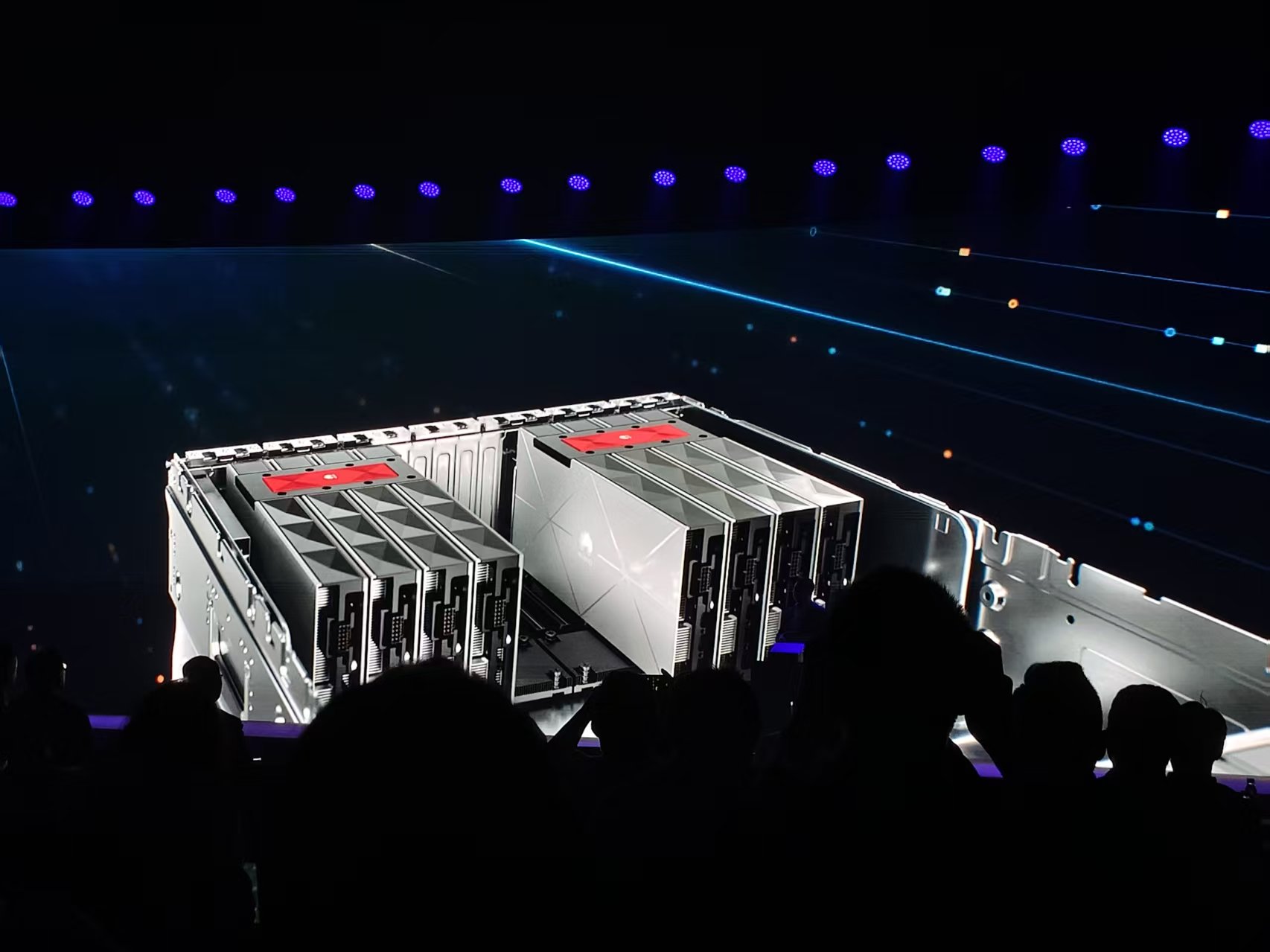

However, in training scenarios, as model parameter counts keep growing, comparing single-card performance figures has become less meaningful, and Huawei has put forward a super-node strategy. At this year's MWC Barcelona, Huawei demonstrated the Atlas 950 super node to the world. It supports up to 8,192 Ascend 950DT cards through the "Lingqu" all-optical interconnect. Even compared with the NVL576 that NVIDIA plans to launch in 2027, the Atlas 950 super node still holds advantages across the board.

At the Ascend Artificial Intelligence Partner Summit held during the partner conference, Zheng Weimin, professor in the Department of Computer Science at Tsinghua University and Ascend Honorary Advisor, said that super nodes have become a key force driving AI technology forward. With core features such as ultra-high bandwidth, ultra-low latency, and unified memory addressing, they are gradually becoming the new normal in AI infrastructure construction.

He also noted that super node technology has already been applied in the Internet, telecommunications, manufacturing, and other industries. Practice has shown that only super nodes with unified memory addressing can truly achieve Scale-Up expansion of computing power. Super nodes give China's computing power the ability to support world-class large models and are helping China's AI computing power move from following others' technology to leading in architecture.

Technological breakthroughs are only the first step. The real challenge lies in building an ecosystem that can develop sustainably. Ma Haixu said at the conference that on August 5, 2025, Huawei formally committed to open-sourcing all Ascend software. So far, CANN and other software have completed architectural decoupling, and the installation packages have been split from 8 into 29 so that developers can install only what they need. Compilation efficiency has increased by 58%.

"We will also support and contribute to the third-party open source ecosystem across the entire stack, from the operator programming framework Triton, to the AI framework PyTorch, to training and acceleration engines such as FSDP and vLLM. We currently support more than 50 third-party open source communities and projects and have contributed more than 650 key features. While matching the habits of partner developers, we will make it easier to innovate on Ascend. This year, we will continue to improve the usability of the software and further optimize out-of-the-box performance, moving from ease of use to comprehensive usability," he said in his speech.

To demonstrate how easy Ascend is to use, Zhang Dixuan cited Zhipu as an example. He said that Zhipu trained the multi-modal large model GLM-Image on Ascend in three months. The model adopts an innovative hybrid architecture that combines autoregression and diffusion. Less than 24 hours after being open-sourced, it topped the Trending list on Hugging Face, the world's largest open source community, proving that Ascend can train world-leading large models.

Artificial intelligence is rapidly becoming part of everyone's work and life. During this year's Spring Festival, a new model was released every 1.5 days on average, and model capabilities keep getting stronger. Seedance 2.0, for example, can generate professional-grade video. At the application level, OpenClaw ignited the global development of Agentic AI, shifting AI applications from "understanding and suggestion" to "perception and execution". In just a few weeks, it has nearly surpassed what Linux achieved in thirty years and has become the most popular open source project, driving rapid growth in demand for AI computing power.

However, real-world scenarios are fragmented, and not every enterprise needs a giant computing system. Training large models with trillions of parameters may require 384 cards, 768 cards, or even more. For many more enterprises, 8 cards are enough for basic inference and small-scale training, with controllable costs and simple operation and maintenance, while 64 cards break through performance bottlenecks and suit medium and large-scale training. The cost is far lower than that of clusters with hundreds or thousands of cards, and the operation and maintenance burden remains manageable.

Huawei has also noticed the demand for more tiers of computing power. Zhang Dixuan said that Ascend products have been upgraded tier by tier for large models of different sizes. For models with tens of billions of parameters, A2 standard cards offer memory bandwidth 1.8 times the industry level. For models with hundreds of billions of parameters, single-machine servers offer computing power 2.3 times the industry level. For models with trillions of parameters, dual-machine super node servers connected directly over Lingqu can deploy trillion-parameter models, with whole-machine computing power 3.78 times the industry level.

At present, "shrimp farming" (slang for running OpenClaw agents) is becoming a craze, which has once again stimulated demand for all-in-one machines. Ma Haixu and others revealed at the conference that in the past month or so, more than a dozen partners have launched Ascend-based Claw all-in-one machines, helping more than 100 customers develop Agent applications based on OpenClaw. To date, Ascend and its partners have built more than 400 industry all-in-one machines serving more than 2,700 customers, accounting for more than 80% of the domestic all-in-one machine market.

Technological progress and ecosystem maturity must ultimately be validated by the market. According to a Bernstein Research forecast, measured by revenue, Huawei's share of China's AI accelerator market is expected to rise to 50% in 2026. NVIDIA may fall to 8% because of bans on its products, AMD will rise to 12%, Hygon to 8%, and Cambricon to 9%, while Moore Threads, Kunlunxin, MetaX, and Biren Technology will each hold between 1% and 3%.