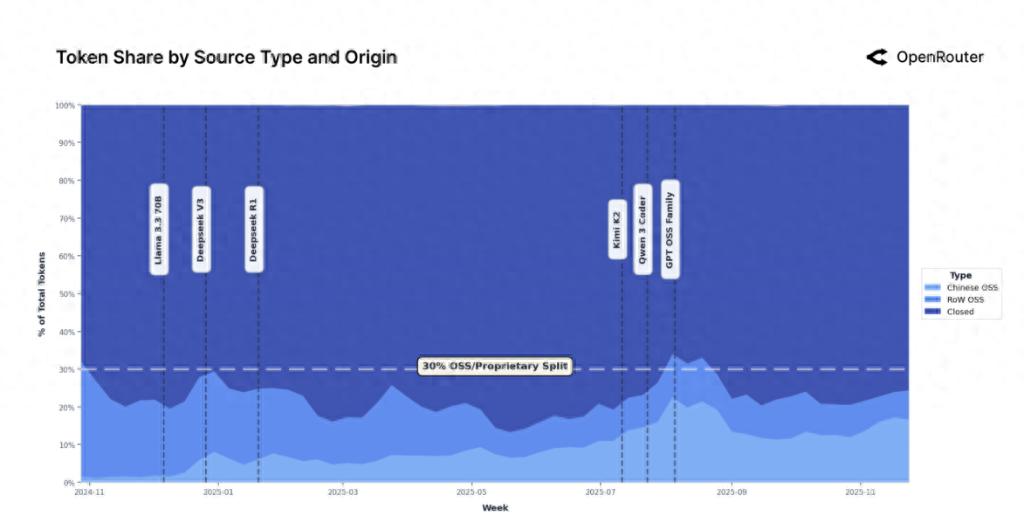

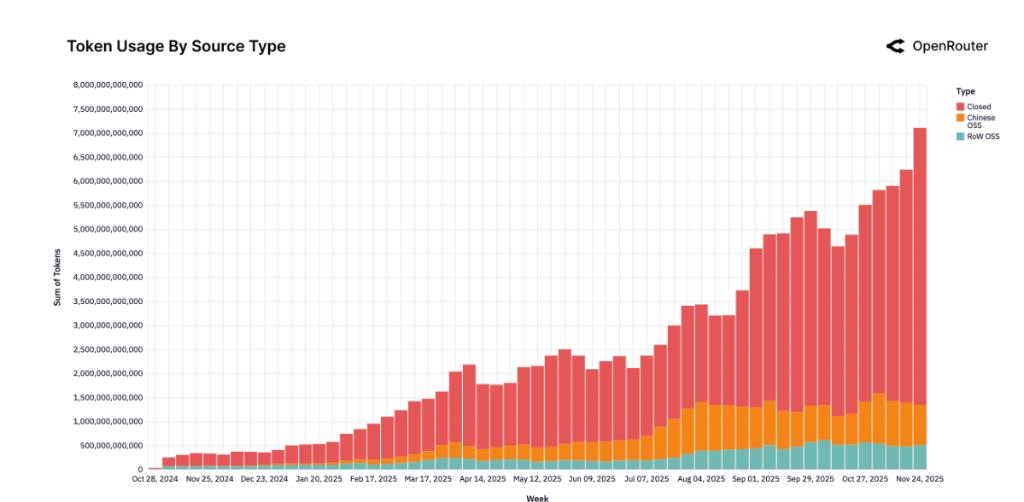

An empirical study of more than 100 trillion tokens processed on the OpenRouter platform shows that the large language model market is being profoundly restructured. The share of open source models has climbed to 33%, breaking the dominance of closed source models. Within open source, the market has shifted from DeepSeek's single-player dominance to diversified competition. Chinese open source AI has risen sharply through this change and now ranks in the global first tier.

On December 4, Silicon Valley venture capital firm a16z and OpenRouter, a large model API platform, said in a co-authored report that the core driver of this change is the explosive growth of Chinese models. The market share of open source models developed in China soared from 1.2% at the end of 2024 to a peak of nearly 30% in mid-2025, averaging 13.0% over the year, almost level with the 13.7% held by open source models from the rest of the world. Chinese models such as Qwen, DeepSeek, and MoonshotAI have moved from fringe participants to core players on the strength of their technical capabilities and local adaptation.

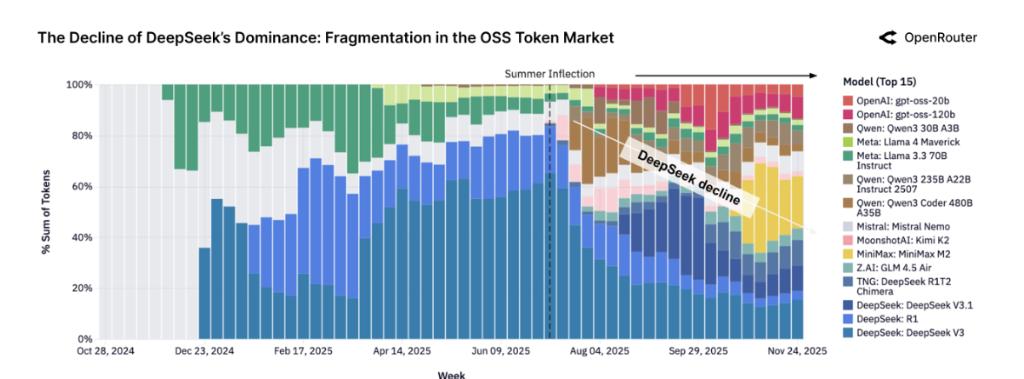

The report points out that the competitive landscape within the open source ecosystem changed just as drastically. After the "summer turning point" in mid-2025, the market moved rapidly from high concentration, with the DeepSeek family holding more than 50% of the share, to fragmented competition. By the end of 2025, no single model held more than 25% of the market, and users had shifted from locking in one "best model" to flexibly combining five to seven top models.

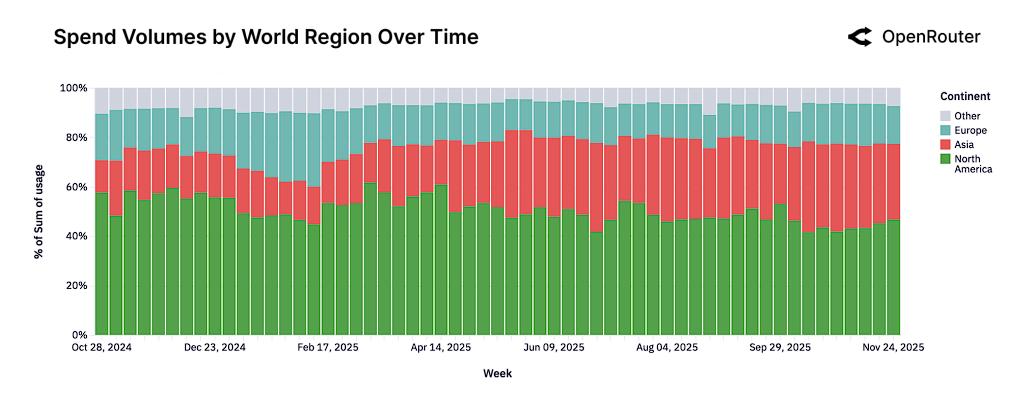

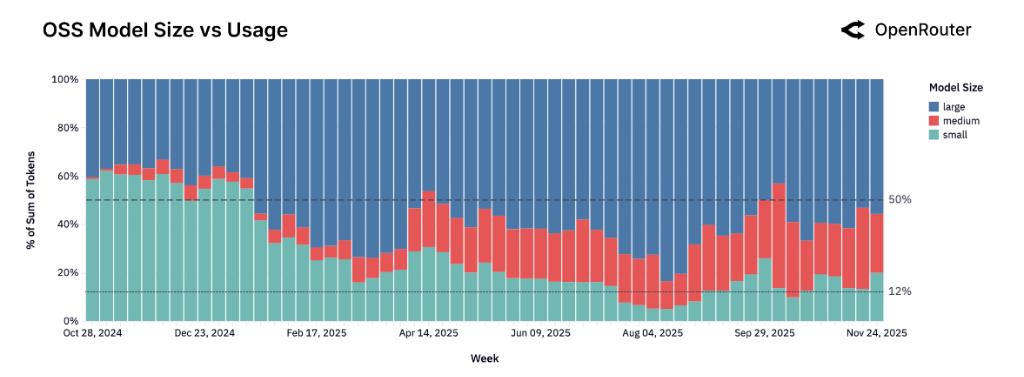

The report also identifies several disruptive trends: medium-sized models (15B-70B) are replacing small models as the mainstream; agent reasoning has overtaken plain text generation as the core source of value; the share of programming applications has soared from 11% to over 50%; and the Asian market's share of spending has more than doubled from 13% to 31%. The rules of competition have shifted from leaderboard scores to real-world retention and the ability to match workloads.

China’s power reshapes open source landscape

The report states that the market has formed a dual-track structure in which "closed source defines the upper limit of performance and open source provides multiple values". By the end of 2025, the market share of open source models had climbed steadily to 33%. This growth is not a short-term boom; it is driven by the continuous iteration of high-quality models such as DeepSeek V3 and Kimi K2.

Chinese open source models have risen faster than expected. At the end of 2024 their market share was only 1.2%; by mid-2025 it had peaked at nearly 30%. Models such as Qwen, DeepSeek, and MoonshotAI have shown distinct advantages in technical capability and localization, marking Chinese AI's entry into the global first tier in open source.

By region, the rise of the Asian market is the most significant: its share of global spending more than doubled from 13% at the start of the study to 31%, making it a key growth engine. North America remains the largest single region, but its share of spending has long been below 50%.

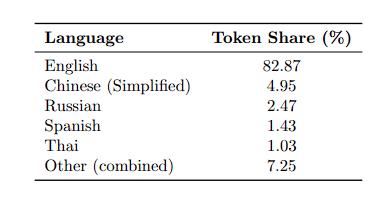

Language distribution data shows that Simplified Chinese has become the second largest language after English with a share of 4.95%, reflecting the strong demand in the Chinese market.

From monopoly to multipolar competition

According to the report, the open source market was highly concentrated at the end of 2024. The V3 and R1 models of the DeepSeek family together accounted for more than 50% of token usage, leaving one player almost entirely dominant. That pattern was overturned after the "summer turning point" in mid-2025.

With the rapid succession of new releases such as Qwen, Minimax, Kimi K2, and the GPT-OSS series, the barriers to competition in the open source market were broken. These new models reached large-scale production use within weeks of release. By the end of 2025, no single model held more than 25% of the open source market.

User behavior has changed fundamentally. Developers have shifted from defaulting to one "best model" to spreading their usage across five to seven top models. The open source ecosystem has entered a stage of full competition with no single dominant player, and multi-model usage has become the industry norm.
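The 25% threshold described above can be checked mechanically from per-model token counts. The following is a minimal sketch, assuming a simple mapping from model name to tokens processed; the function names, model names, and numbers are hypothetical and are not taken from the report.

```python
def market_shares(token_counts):
    """Return each model's share of total tokens as a fraction of 1."""
    total = sum(token_counts.values())
    if total == 0:
        raise ValueError("no tokens recorded")
    return {model: count / total for model, count in token_counts.items()}


def is_fragmented(token_counts, cap=0.25):
    """True when no single model holds more than `cap` of total tokens."""
    return max(market_shares(token_counts).values()) <= cap


# Hypothetical token counts, for illustration only (not figures from the report).
concentrated = {"model_a": 55, "model_b": 25, "model_c": 20}
fragmented = {"model_a": 24, "model_b": 22, "model_c": 20, "model_d": 18, "model_e": 16}

print(is_fragmented(concentrated))  # one model holds 55% of tokens
print(is_fragmented(fragmented))    # the largest model holds 24% of tokens
```

The `cap` parameter defaults to the 25% figure cited in the text; a different threshold would give a stricter or looser definition of fragmentation.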

"Medium is the new small" subverts size perception

The empirical data from more than 100 trillion tokens overturns the traditional view that "the open source ecosystem is dominated by small and lightweight models". Developers' actual usage is reshaping the distribution of model sizes.

small model (

In contrast, medium-sized models (15B-70B) have grown explosively from almost nothing, and models in this range, represented by Qwen2.5 Coder 32B, have quickly formed a fiercely competitive ecosystem.

Models of this size match users' need for a balance between capability and efficiency, and they have become the main growth driver of the open source market, supporting the new industry consensus that "medium is the new small".

Competition among large models (>70B) is also diversified. Models such as Qwen3 235B and Z.AI GLM 4.5 have become core benchmarks, and users tend to switch flexibly between several top large models.

Chinese characteristics of application scenarios

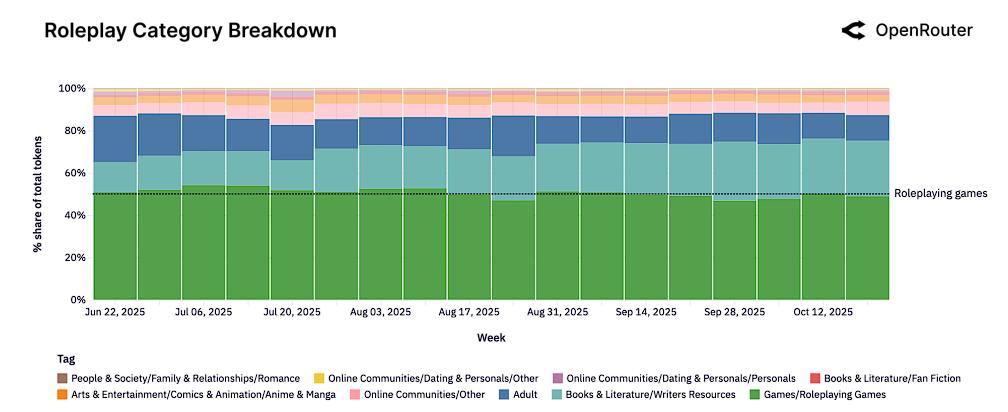

Across all open source models, role-playing is the largest application, with over 50% of tokens, helped by the fact that open source models have fewer content restrictions. Programming assistance ranks second at 15%-20%, and its share continues to grow.

Chinese open source models, however, show a distinctly different profile. In contrast to the role-playing dominance of the global market, programming and technology applications together account for 39% of their usage, exceeding role-playing's 33%.

This difference suggests that Chinese open source models can compete directly with world-class models in productivity areas such as code generation and technical reasoning. Their value lies more in professional efficiency than in entertainment, a positioning that may give Chinese models a distinctive competitive advantage in the enterprise market.

Agent reasoning leads paradigm shift

The most disruptive finding of the research is a fundamental paradigm shift in how LLMs are used: from single-turn text completion to multi-step, tool-integrated agent reasoning workflows.

The volume of tokens processed by reasoning-optimized models has soared from almost negligible in early 2025 to more than 50% of total usage. This change is driven from both the supply side and the demand side.

On the supply side, the release of models such as GPT-5 and Claude 4.5 has greatly raised the upper limit of reasoning capability; on the demand side, users increasingly favor models that can manage task state, follow multi-step logic, and support agent workflows.

Two key features accompany the rise of agent reasoning:

Prompt length has increased dramatically. The average number of input tokens per request has grown roughly fourfold, from 1.5K to more than 6K. Prompts for programming tasks exceed 20K tokens, which is 3-4 times the length seen in other categories;

Tool calls are becoming increasingly common, with models such as Claude 4.5 Sonnet and Grok Code Fast leading the way, marking the transformation of the LLM from "text generator" to "action executor".

"Glass slipper effect" defines new moat

The study identified "foundational cohorts" of users with exceptionally high long-term retention, and proposed a "Cinderella's glass slipper effect" framework to explain the phenomenon, defining the core moat of the AI era.

The core logic of the framework is as follows. The market always contains high-value "workloads" whose needs are not yet met. Each new generation of models is a round of "trying on the glass slipper". When a model is the first to satisfy the technical and economic constraints of a specific workload, users build processes and data pipelines around it, which creates very high switching costs and stickiness.

The data supports this logic: the early foundational cohorts of Claude 4 Sonnet and Gemini 2.5 Pro still show a retention rate of 40% after five months, while models that never achieved such a match, such as Llama 4 Maverick, retain users poorly across all cohorts. The DeepSeek models also show a distinctive "boomerang effect", in which some users who left return after trying other models.
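Cohort retention of the kind cited above is typically computed as the fraction of an initial user group still active in each later month. The sketch below shows one way to do this, assuming per-month lists of active user IDs; the function name, user IDs, and activity data are hypothetical and do not reproduce the report's methodology or figures.

```python
def retention_curve(cohort, active_by_month):
    """Fraction of the original cohort that is active in each later month."""
    cohort = set(cohort)
    if not cohort:
        raise ValueError("cohort is empty")
    return [len(cohort & set(active)) / len(cohort) for active in active_by_month]


# Hypothetical data, for illustration only (not figures from the report).
cohort = ["u1", "u2", "u3", "u4", "u5"]
active_by_month = [
    ["u1", "u2", "u3", "u4"],  # month 1: four of five cohort users active
    ["u1", "u2", "u6"],        # month 2: u6 is outside the cohort and is ignored
    ["u1", "u3"],              # month 3: u3 returns after a month away
]

curve = retention_curve(cohort, active_by_month)
print(curve)
```

Because each month is measured independently against the original cohort, a user who lapses and later comes back (like `u3` here) counts again on return, which is how a "boomerang" pattern would show up in such a curve.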

The finding suggests that the real competitive barrier comes from being the first to match a workload to a model, and from the highly sticky user base that results. Retention matters far more than growth. The industry's focus is shifting from marginal leaderboard advantages to empirical analysis and operational optimization of real-world use, and from single-model competition to flexible multi-model strategies. Open source and closed source, East and West, will coexist and compete for a long time.